TIM Datasheet Values vs. Real-World Performance: What Engineers Need to Verify

Introduction

The scenario is familiar to most power electronics engineers who have worked through a thermal design cycle. The thermal model, built around datasheet values from the selected TIM, predicts a junction temperature of 85°C at full load. Hardware testing returns 94°C. The difference is not explained by measurement error or model simplification — the TIM is simply not delivering the performance the datasheet implied it would.

This gap between datasheet values and real assembly performance is common enough to be expected rather than surprising. It has several contributing causes, and most of them are not the result of supplier misrepresentation. They are the result of engineers using datasheet numbers in ways the datasheets were not designed to support — treating typical values as guaranteed minimums, comparing numbers generated by different test methods, or applying laboratory-condition data directly to assembly conditions that differ significantly from the test setup.

Understanding where the gap comes from makes it manageable. This article covers the main sources of datasheet-to-reality divergence in TIM specifications — thermal conductivity measurement dependence, thermal resistance conditionality, dimensional tolerances, and the parameters that datasheets routinely omit — and explains how to use datasheet data correctly in thermal models and procurement decisions.

Why Datasheet Values Are Not Guaranteed Performance Numbers

Typical vs. minimum vs. specification values

The first thing to check on any TIM datasheet is what type of value is being reported. Three different value types appear in TIM datasheets, and they mean very different things for design purposes.

Typical values represent the average or central tendency of measured results from characterized samples. They are the most commonly reported value type in TIM datasheets and the most frequently misused. A typical thermal conductivity of 6.0 W/m·K means that the average of the supplier's characterized samples measured 6.0 W/m·K — it does not mean that every production pad will deliver 6.0 W/m·K, or that 6.0 W/m·K is the minimum you should expect.

Minimum values, where reported, define the lower bound that the supplier commits to. Designing to a minimum value rather than a typical value adds margin that accounts for the production variation that exists below the typical. Many TIM datasheets do not report minimums, which means the typical value is all you have — and using it as a design minimum overstates the guaranteed performance.

Specification values appear in some datasheets as the acceptance threshold for production release — the value that incoming inspection or production QC is measured against. These are more reliable as design inputs than typical values because they represent a committed threshold rather than an observed average.

When a datasheet reports only typical values without minimums or specification limits, build your own margin into the design. Using typical values directly as design inputs without margin treats average performance as guaranteed performance — which it is not.

Sample selection bias in reported values

Datasheet values are generated from characterized samples, and the samples selected for characterization are not always representative of the full production population. A supplier who measures thermal conductivity on carefully prepared, freshly produced samples under optimal laboratory conditions generates a different number than the same measurement conducted on randomly selected production samples across multiple batches.

This is not deliberate misrepresentation — it reflects the practical reality that characterization is often done during product development on optimized samples, while production variation is only fully understood after the product has been in production long enough to accumulate batch history. For established products from mature suppliers, the gap between characterization samples and production average is usually small. For newer products or smaller suppliers with limited production history, it can be larger.

The difference between a datasheet value and a production specification

A datasheet is a marketing and technical reference document. A production specification is a controlled document that defines acceptance criteria for manufactured product. These are not the same thing, and they are not always consistent with each other.

For critical applications, request the supplier's internal product specification — the document that defines what incoming inspection and production QC measures against — alongside the datasheet. If the supplier cannot provide this or does not have one, the datasheet value is the only reference for production quality, which means there is no documented commitment to delivering within any defined range of that value.

Thermal Conductivity: The Most Commonly Misread Parameter

Test method dependence

As covered in the thermal conductivity guide, the W/m·K value on a datasheet is meaningless without knowing how it was measured. ASTM D5470 measures thermal impedance across an actual interface under defined pressure and calculates conductivity from that measurement. Laser flash diffusivity measures bulk material conductivity with no interface, under no compressive load. The hot disk method, a transient plane source technique, produces its own characteristic results.

The same physical material, measured by these three methods, can produce thermal conductivity values that differ by 20 to 40%. A pad measuring 5.0 W/m·K by ASTM D5470 may measure 7.0 W/m·K by laser flash. Both numbers are technically accurate for what they measure. They are measuring different things, and only ASTM D5470 measures something close to what happens at the interface in your assembly.

When the test method is not stated on the datasheet — which is more common than it should be — the conductivity value cannot be used reliably in a thermal model or a cross-supplier comparison. Ask the supplier which method was used before using the number for any engineering purpose.

Pressure dependence within ASTM D5470

Even when two suppliers both use ASTM D5470, the reported values may not be directly comparable if the test pressure differs. The apparent thermal conductivity of a compressible pad increases with applied pressure because higher pressure reduces bond line thickness and improves surface contact. A pad measured at 50 psi will show higher conductivity than the same pad measured at 10 psi.

ASTM D5470 does not specify a single standard test pressure: it defines the method but leaves the pressure as a test parameter. Suppliers are free to choose the pressure that produces the most favorable characterization result, which is not always the pressure closest to your assembly clamping conditions. A value measured at 100 psi, applied to a design where the actual clamping pressure is 20 psi, significantly overestimates real-world performance.

When comparing across suppliers or using a conductivity value in a thermal model, confirm the test pressure and compare it against your actual assembly clamping pressure. If the gap is large, apply a correction factor or request test data at a pressure closer to your conditions.
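Where a supplier can provide impedance at several test pressures, one way to estimate the value at your clamping pressure is to interpolate between the measured points rather than reuse the headline number. The sketch below is a minimal illustration only: the function name and the three curve points are hypothetical placeholders, not data from any real datasheet, and linear interpolation between points is itself an assumption. It refuses to extrapolate outside the measured range.

```python
from bisect import bisect_right


def interpolate_impedance(pressure_psi, curve):
    """Linearly interpolate impedance (°C·cm²/W) at a given pressure.

    `curve` is a list of (pressure_psi, impedance) points sorted by pressure.
    Raises ValueError outside the measured range instead of extrapolating.
    """
    pressures = [p for p, _ in curve]
    if not pressures[0] <= pressure_psi <= pressures[-1]:
        raise ValueError("pressure is outside the measured range")
    i = bisect_right(pressures, pressure_psi)
    if i == len(pressures):  # exactly at the last measured point
        return curve[-1][1]
    (p0, r0), (p1, r1) = curve[i - 1], curve[i]
    return r0 + (r1 - r0) * (pressure_psi - p0) / (p1 - p0)


# Hypothetical supplier curve: (test pressure in psi, impedance in °C·cm²/W).
example_curve = [(10.0, 3.0), (50.0, 2.2), (100.0, 1.9)]

# Estimate at an assumed 20 psi assembly clamping pressure.
print(f"{interpolate_impedance(20.0, example_curve):.2f} °C·cm²/W")
```

If your clamping pressure falls outside the range the supplier tested, the error raised here is the useful result: it is the signal to request additional test data.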

Why the same material shows 20–30% variation depending on measurement

Beyond test method and pressure, several additional factors contribute to variation in reported conductivity values for nominally identical materials. Sample thickness affects the measurement — thinner samples have higher interface-to-bulk resistance ratios, which affects ASTM D5470 results. Surface finish of the test fixture contact blocks introduces variation. Sample-to-sample variation in filler loading within a batch produces real material variation that shows up in measurements.

The practical implication is that a 20–30% variation in reported conductivity between suppliers for nominally equivalent products does not necessarily mean one material is better than the other — it may reflect measurement condition differences rather than real performance differences. Hardware testing in your actual assembly is the only reliable method for resolving this ambiguity.

How to use conductivity values correctly in thermal models

Use conductivity values in thermal models as order-of-magnitude estimates to confirm that a material is in the right performance tier for your application — not as precise inputs that determine junction temperature to within a degree. Build in margin: if the model shows acceptable junction temperature using the datasheet conductivity value with no margin, the real assembly will likely run hotter.

For the thermal model input that most directly determines junction temperature, use thermal resistance at your working bond line thickness rather than bulk conductivity. If the supplier provides a thermal impedance curve across a range of thicknesses and pressures, use that data — it is closer to real assembly conditions than the bulk conductivity value.

Thermal Resistance: Closer to Reality but Still Conditional

Why thermal resistance is more useful than conductivity

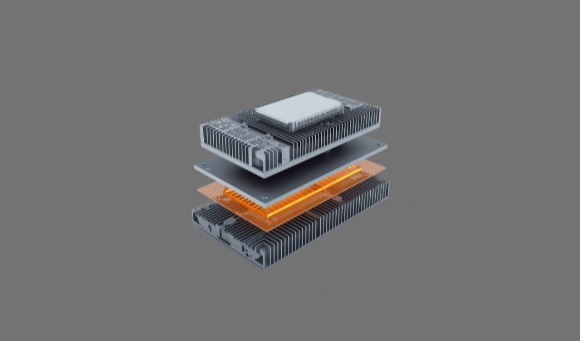

Thermal resistance — expressed as °C·cm²/W or K·cm²/W — integrates conductivity and thickness into a single value that directly represents interface performance at specified conditions. It is the parameter that appears in junction temperature calculations and is therefore the number that matters for design decisions.

For a pad of defined conductivity and thickness, thermal resistance is calculable from first principles. But reported thermal resistance values on datasheets carry their own set of conditions and assumptions that affect how closely they reflect real assembly performance.
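The first-principles relationship is R = t / k, with a unit conversion to reach °C·cm²/W. A minimal sketch follows; the function name and the example inputs are illustrative assumptions, and the result covers bulk material only, with no contact resistance.

```python
def bulk_resistance_c_cm2_per_w(thickness_mm: float, conductivity_w_per_mk: float) -> float:
    """Bulk area-normalized thermal resistance R = t / k, in °C·cm²/W.

    Excludes contact resistance at both surfaces, so it is a lower bound
    on what the interface will show in an assembly.
    """
    if thickness_mm <= 0 or conductivity_w_per_mk <= 0:
        raise ValueError("thickness and conductivity must be positive")
    thickness_m = thickness_mm / 1000.0
    resistance_m2k_per_w = thickness_m / conductivity_w_per_mk
    return resistance_m2k_per_w * 1.0e4  # 1 m² = 10⁴ cm²


# Illustrative inputs only: a 0.5 mm bond line at 6.0 W/m·K.
print(f"{bulk_resistance_c_cm2_per_w(0.5, 6.0):.3f} °C·cm²/W")
```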

What conditions the datasheet thermal resistance value assumes

A thermal resistance value reported on a TIM datasheet is measured under specific conditions: a defined compressive pressure, a specific bond line thickness (either nominal or compressed), a specific test fixture surface finish, and a controlled ambient temperature. These conditions are chosen to produce a representative measurement — but representative of a laboratory fixture, not your specific assembly.

The most significant assumption is surface finish. ASTM D5470 test fixtures use lapped metal surfaces with Ra values typically below 0.1 µm — smoother than most production heatsink surfaces. Contact resistance at the TIM-to-metal interface decreases as surface roughness decreases, which means the datasheet thermal resistance value reflects better surface contact than your assembly will achieve with a standard machined or extruded heatsink.

How your assembly conditions differ

Production heatsink surfaces range from Ra 0.4 µm for finish-machined aluminum to Ra 3.0 µm or higher for as-cast or as-extruded surfaces. This roughness range produces measurably higher contact resistance than the test fixture surface, which adds to the total interface resistance beyond the datasheet value.

Assembly clamping pressure in production varies with fastener torque tolerance — typically ±20 to 30% from nominal torque values under production conditions without torque-controlled tools. This pressure variation produces corresponding variation in bond line thickness and thermal resistance across the production population.

The combined effect of realistic surface finish and production clamping variation typically adds 10 to 25% to the datasheet thermal resistance value in real assemblies. For a tight thermal budget where the model shows marginal junction temperature headroom, this gap is the difference between a design that works in hardware and one that does not.

Contact resistance: the variable datasheets rarely capture

Contact resistance — the additional thermal resistance at the interface between the TIM surface and the mating metal surfaces — is determined by surface roughness, hardness of the TIM material, and applied pressure. It is distinct from the bulk thermal resistance of the pad material itself, and it is not captured in bulk conductivity measurements.

Some ASTM D5470 measurements inherently include contact resistance because they measure total impedance across the interface including the contact contribution. Others report only the bulk material contribution. The distinction is not always clear from the datasheet, and contact resistance can account for 20 to 40% of total interface resistance in assemblies with rough heatsink surfaces and moderate clamping pressure.

For assemblies using as-cast or as-extruded aluminum heatsinks, the contact resistance contribution is larger than for machined surfaces. Softer TIM materials that conform more readily to surface roughness reduce contact resistance and partially compensate for higher bulk thermal resistance — which is one reason a softer 4.0 W/m·K pad sometimes outperforms a stiffer 6.0 W/m·K pad in hardware despite the conductivity difference.
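The softer-pad effect can be illustrated by treating total interface resistance as bulk resistance plus a contact term. In the sketch below, every number is a hypothetical placeholder chosen to show the mechanism: the compressed thicknesses and the contact resistance values are assumptions, not measurements of any real material.

```python
from dataclasses import dataclass


@dataclass
class PadCase:
    name: str
    conductivity_w_per_mk: float
    blt_mm: float                # compressed bond line thickness (assumed)
    contact_c_cm2_per_w: float   # both surfaces combined (assumed)

    def bulk(self) -> float:
        """Bulk resistance t / k in °C·cm²/W."""
        return self.blt_mm / 1000.0 / self.conductivity_w_per_mk * 1.0e4

    def total(self) -> float:
        """Bulk plus contact resistance in °C·cm²/W."""
        return self.bulk() + self.contact_c_cm2_per_w


# Hypothetical comparison on a rough heatsink surface.
soft = PadCase("soft 4.0 W/m·K", 4.0, blt_mm=0.80, contact_c_cm2_per_w=0.30)
stiff = PadCase("stiff 6.0 W/m·K", 6.0, blt_mm=1.00, contact_c_cm2_per_w=1.00)

for pad in (soft, stiff):
    print(f"{pad.name}: bulk {pad.bulk():.2f}, total {pad.total():.2f} °C·cm²/W")
```

With these assumed inputs the stiffer pad has the lower bulk resistance but the higher total, which is the pattern hardware testing sometimes reveals.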

Calculating expected real-world thermal resistance

A practical approach for thermal model inputs is to take the datasheet thermal resistance value at your expected bond line thickness and apply a correction factor of 1.15 to 1.25 to account for realistic surface conditions and clamping variation. This adjusted value is a more conservative and more realistic design input than the raw datasheet number.

Validate the corrected value against hardware measurements in your actual assembly during qualification. If the measured value falls within the corrected range, the model is well-calibrated. If measured values are consistently above the corrected range, the surface finish or clamping conditions in your assembly are worse than the correction factor assumed — investigate and adjust accordingly.
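The comparison described above can be sketched as a small helper. The function names are hypothetical, the 1.15 and 1.25 defaults come from the correction range given in this section, and the "below range" message is this sketch's own interpretation, since only the within and above cases are discussed in the text.

```python
def corrected_range(datasheet_r: float, low: float = 1.15, high: float = 1.25) -> tuple[float, float]:
    """Return the (low, high) corrected thermal resistance for a datasheet value."""
    return datasheet_r * low, datasheet_r * high


def classify_measurement(measured_r: float, datasheet_r: float) -> str:
    """Compare a hardware measurement against the corrected datasheet range."""
    lower, upper = corrected_range(datasheet_r)
    if measured_r > upper:
        return "above corrected range: investigate surface finish and clamping"
    if measured_r < lower:
        # Not covered in the text; treated here as a conservative model.
        return "below corrected range: correction factor is conservative here"
    return "within corrected range: model is well-calibrated"


# Illustrative values in °C·cm²/W.
print(classify_measurement(measured_r=2.4, datasheet_r=2.0))
print(classify_measurement(measured_r=2.7, datasheet_r=2.0))
```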

Thickness and Dimensional Tolerances

What ±10% thickness tolerance actually means

A thermal pad specified at 1.0mm nominal thickness with ±10% tolerance covers a range from 0.9mm to 1.1mm. That 0.2mm total range sounds modest, but its effect on thermal resistance is not. For a material with 5.0 W/m·K conductivity, the difference in bulk thermal resistance between a 0.9mm and a 1.1mm pad is approximately 0.4 °C·cm²/W (0.2mm divided by 5.0 W/m·K), which is meaningful in a tight thermal budget where the bulk TIM resistance at nominal thickness is only about 2.0 °C·cm²/W.
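The arithmetic for a tolerance band can be checked with a few lines. This is a sketch using the 1.0mm, ±10%, 5.0 W/m·K example; the function names are illustrative, and only bulk resistance (t / k) is computed, without contact resistance or compression.

```python
def bulk_r(thickness_mm: float, conductivity_w_per_mk: float) -> float:
    """Bulk resistance t / k, converted from m²·K/W to °C·cm²/W."""
    return thickness_mm / 1000.0 / conductivity_w_per_mk * 1.0e4


def resistance_band(nominal_mm: float, tolerance_fraction: float, conductivity_w_per_mk: float):
    """Return bulk resistance at the thin limit, nominal, and thick limit."""
    thin = nominal_mm * (1.0 - tolerance_fraction)
    thick = nominal_mm * (1.0 + tolerance_fraction)
    return tuple(bulk_r(t, conductivity_w_per_mk) for t in (thin, nominal_mm, thick))


low, nominal, high = resistance_band(1.0, 0.10, 5.0)
print(f"thin {low:.2f}, nominal {nominal:.2f}, thick {high:.2f} °C·cm²/W")
print(f"spread across the tolerance band: {high - low:.2f} °C·cm²/W")
```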

More importantly, thickness tolerance is a production population characteristic, not a unit-level guarantee. Individual pads within a batch will fall somewhere within the tolerance band, and the distribution across a production run determines the spread in thermal resistance across your assembled units. A batch where most pads cluster near the upper end of the tolerance band — 1.08 to 1.10mm in the example above — produces a systematically higher thermal resistance than the nominal datasheet value predicts, without any individual pad being out of specification.

How thickness variation affects bond line thickness

Nominal pad thickness and bond line thickness are different quantities. BLT is the compressed thickness under assembly clamping conditions, which depends on both the initial pad thickness and the compression behavior of the material under the applied pressure. A pad that starts at 1.1mm and compresses 15% under assembly pressure produces a BLT of 0.935mm. A pad from the same batch that starts at 0.9mm and compresses the same 15% produces a BLT of 0.765mm. The BLT difference between these two units — both within specification — is 0.17mm, which produces a measurable difference in thermal resistance across the production population.

This is why thermal models built around a single nominal BLT value do not accurately represent production performance distribution. The correct approach is to model the worst-case BLT — derived from the upper end of the thickness tolerance combined with the lower end of the compression range — and verify that junction temperature remains acceptable at that condition.
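A minimal sketch of the corner calculation follows. The 15% figure reproduces the example above; the 10% to 20% compression range used for the corners is an assumed placeholder, and in practice it would come from the material's compression curve at your clamping pressure limits.

```python
def compressed_blt_mm(initial_mm: float, compression_fraction: float) -> float:
    """Bond line thickness after the pad compresses by the given fraction."""
    if not 0.0 <= compression_fraction < 1.0:
        raise ValueError("compression fraction must be in [0, 1)")
    return initial_mm * (1.0 - compression_fraction)


def blt_corners(nominal_mm, thickness_tol, compression_min, compression_max):
    """Return (best_case, worst_case) BLT in mm.

    Worst case: thickest pad with the least compression.
    Best case: thinnest pad with the most compression.
    """
    worst = compressed_blt_mm(nominal_mm * (1.0 + thickness_tol), compression_min)
    best = compressed_blt_mm(nominal_mm * (1.0 - thickness_tol), compression_max)
    return best, worst


# The two in-tolerance units from the example, both at 15% compression.
print(f"{compressed_blt_mm(1.1, 0.15):.3f} mm, {compressed_blt_mm(0.9, 0.15):.3f} mm")

# Corners for an assumed 10% to 20% compression range.
best, worst = blt_corners(1.0, 0.10, compression_min=0.10, compression_max=0.20)
print(f"best {best:.2f} mm, worst {worst:.2f} mm")
```

The worst-case value is the one to carry into the thermal model when checking junction temperature headroom.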

Compression behavior vs. nominal thickness

The compression stress vs. thickness curve tells you more about real assembly BLT than the nominal thickness specification. A pad with a steep compression curve — one that resists compression strongly — produces less BLT reduction under a given clamping pressure and is therefore more sensitive to initial thickness variation. A pad with a flat compression curve accommodates thickness variation more effectively because small differences in initial thickness produce proportionally smaller differences in BLT under pressure.

When evaluating TIM options for assemblies with significant gap variation or wide fastener torque tolerance, check the compression curve shape alongside the nominal thickness and tolerance. A softer, more compressible pad often produces more consistent BLT across a production population than a stiffer pad with nominally tighter thickness tolerance.

Tolerance compounding across the production population

In a complete thermal stack — component package thickness tolerance, PCB thickness variation, heatsink flatness, TIM thickness tolerance, and fastener torque variation — each tolerance contributes to the total BLT distribution. The TIM thickness tolerance is one element in this stack, and its effect compounds with the others.

For a production run of 1000 units, the combined effect of all tolerances produces a distribution of BLT values and corresponding thermal resistance values. The units at the unfavorable end of this distribution run hotter than the nominal design prediction — and the margin between the worst-case unit and the component's thermal limit determines whether any field failures occur. Designing to the nominal BLT without accounting for tolerance compounding is one of the more common causes of field thermal failures that appear only in a subset of production units rather than universally.
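One way to see the distribution rather than a single nominal value is a small Monte Carlo run. The sketch below is deliberately simplified and rests on stated assumptions: it samples only pad thickness and compression, draws both from uniform distributions, and ignores the package, PCB, and heatsink flatness contributions that a full stack-up would include. All parameter values are placeholders.

```python
import random


def simulate_bulk_resistance(units=1000, nominal_mm=1.0, thickness_tol=0.10,
                             compression_range=(0.10, 0.20),
                             conductivity_w_per_mk=5.0, seed=0):
    """Return a sorted list of bulk TIM resistances (°C·cm²/W), one per unit."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    results = []
    for _ in range(units):
        thickness_mm = rng.uniform(nominal_mm * (1.0 - thickness_tol),
                                   nominal_mm * (1.0 + thickness_tol))
        compression = rng.uniform(*compression_range)
        blt_mm = thickness_mm * (1.0 - compression)
        results.append(blt_mm / 1000.0 / conductivity_w_per_mk * 1.0e4)
    return sorted(results)


resistances = simulate_bulk_resistance()
median = resistances[len(resistances) // 2]
p99 = resistances[int(0.99 * len(resistances)) - 1]
print(f"median {median:.2f}, 99th percentile {p99:.2f}, max {resistances[-1]:.2f} °C·cm²/W")
```

The upper percentile, not the median, is the figure to compare against the thermal limit, because it represents the units most likely to fail in the field.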

Parameters That Datasheets Often Omit or Understate

Compression set

Compression set is the permanent thickness reduction that remains after sustained compressive load is removed. It is the parameter most relevant to long-term thermal interface stability in sealed, non-serviceable assemblies — and it is absent from the majority of commercial TIM datasheets.

The omission is not accidental. Compression set testing takes time, since standard methods require 22 to 70 hours of sustained load at elevated temperature, and the results are not always favorable for commercial positioning. A pad that shows 20% compression set after 70 hours at 125°C, expressed as a fraction of original thickness, will be 0.2mm thinner after extended service if it was originally 1.0mm. In a fixed-gap assembly, that lost thickness reduces contact pressure at the interface, which increases thermal resistance over the product's service life.

For applications with service life targets of five years or more, request compression set data explicitly. If the supplier cannot provide it, either commission independent testing on samples or treat the long-term thermal resistance of that material as uncharacterized — which it effectively is.

Aging behavior at operating temperature

Polymer-matrix TIM materials change properties over time when held at elevated temperature. Silicone-based pads may experience changes in cross-link density that alter hardness and compressibility. Dispensed gap fillers may undergo partial volatilization of low-molecular-weight components that changes their mechanical and thermal properties. The direction and magnitude of these changes depend on the specific formulation and the operating temperature.

Standard datasheets report properties of fresh, unaged material. For sealed assemblies operating at sustained elevated temperature — a telecom rectifier module, an industrial inverter running continuous duty — the material properties at year five are more relevant to reliability than the properties at day one. Aged property data is rarely available from standard datasheets. For critical applications, request it or treat the material as uncharacterized for long-term performance.

Batch-to-batch variation

The single thermal conductivity value on a datasheet represents one measurement or an average of a small number of measurements from characterized samples. It says nothing about how much that value varies from batch to batch in production.

Batch-to-batch variation in thermal conductivity of ±10 to 15% is not unusual in commercial TIM production — it reflects filler loading variation, raw material lot differences, and process parameter drift over time. For a material with a nominal 6.0 W/m·K, this range means production batches may deliver anywhere from 5.1 to 6.9 W/m·K — a spread that produces meaningful junction temperature variation across the production population if the thermal budget is tight.

Batch test data requested at incoming inspection, as described in the supplier qualification guide, is the only way to quantify this variation for a specific supplier and product combination. Until you have batch data across multiple production lots, the batch-to-batch variation in your supply is unknown.

Surface finish sensitivity

Datasheet thermal resistance values are measured on lapped fixture surfaces with roughness below 0.1 µm. The sensitivity of the reported value to surface finish — how much the thermal resistance increases as heatsink surface roughness increases from the test fixture condition to your production condition — is not reported on standard datasheets.

This sensitivity varies significantly between TIM types. Soft, conformable pads accommodate surface roughness through elastic deformation and show relatively low sensitivity to surface finish variation. Harder pads with higher elastic modulus bridge surface peaks without filling the valleys, producing higher contact resistance on rough surfaces and stronger sensitivity to surface finish variation.

For assemblies using non-machined heatsink surfaces, surface finish sensitivity is a relevant selection parameter that standard datasheets do not address. The only reliable way to quantify it for a specific material and surface combination is hardware testing on representative heatsink samples.

How to Use Datasheet Values Correctly

Add appropriate margin to thermal model inputs

The practical starting point is to treat datasheet thermal conductivity and thermal resistance values as optimistic estimates rather than conservative design inputs. For thermal models used to verify junction temperature headroom, apply a correction factor of 1.15 to 1.25 to the datasheet thermal resistance at nominal BLT. This range accounts for the typical gap between laboratory test conditions and production assembly conditions — surface finish, clamping variation, and contact resistance contributions that the datasheet does not capture.

If the thermal model shows adequate junction temperature margin after applying this correction, the design has reasonable robustness to real-world assembly variation. If it only passes without the correction, the design is relying on datasheet best-case performance and will likely show thermal margin problems in hardware.
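The pass-with-correction check can be sketched with a simple series model. Everything here is an assumption made for illustration: the model (heatsink reference temperature plus power times junction-to-case and TIM resistance), the function names, and all operating-point numbers. A real thermal model will have more elements.

```python
def junction_temp_c(heatsink_temp_c, power_w, r_junction_case_c_per_w,
                    tim_r_c_cm2_per_w, contact_area_cm2):
    """Junction temperature from a simplified series resistance model."""
    tim_r_c_per_w = tim_r_c_cm2_per_w / contact_area_cm2
    return heatsink_temp_c + power_w * (r_junction_case_c_per_w + tim_r_c_per_w)


def margin_report(limit_c, datasheet_tim_r, factors=(1.0, 1.15, 1.25), **operating_point):
    """Map each correction factor to the remaining margin (limit minus Tj) in °C."""
    return {
        factor: limit_c - junction_temp_c(tim_r_c_cm2_per_w=datasheet_tim_r * factor,
                                          **operating_point)
        for factor in factors
    }


# Hypothetical operating point and limit.
report = margin_report(limit_c=87.0, datasheet_tim_r=0.8,
                       heatsink_temp_c=60.0, power_w=50.0,
                       r_junction_case_c_per_w=0.3, contact_area_cm2=4.0)
for factor, margin in report.items():
    status = "pass" if margin >= 0 else "fail"
    print(f"factor {factor:.2f}: margin {margin:+.1f} °C ({status})")
```

In this made-up case the design passes on the raw datasheet value and fails at the upper correction factor, which is the situation the paragraph above warns about.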

Which parameters to verify by measurement in your assembly

Not every datasheet parameter requires independent verification in hardware. Some parameters — dimensions, hardness, electrical insulation — can be verified by incoming inspection. Others require assembly-level measurement.

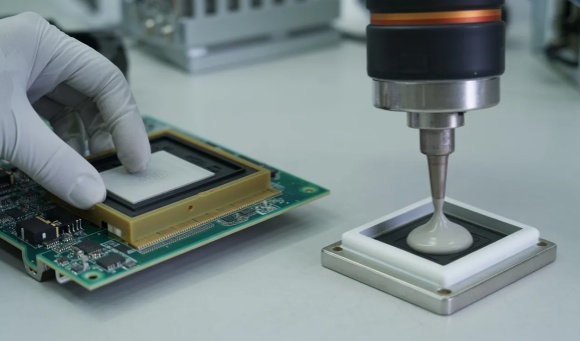

Thermal resistance must be verified in your actual assembly under your actual conditions. No amount of datasheet analysis substitutes for this measurement. Measure junction temperature or case-to-heatsink temperature difference in hardware under controlled power dissipation, and compare against your thermal model prediction using the corrected datasheet value. If the measured value falls within the expected range, the model is well-calibrated and the material is performing as expected. If it falls outside the range, investigate the specific source of divergence before proceeding to production.
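Converting a hardware measurement into the same units as the datasheet is a one-line calculation: temperature difference times contact area, divided by dissipated power. The sketch below assumes case and heatsink temperatures measured at steady state; the function name and the five readings are hypothetical placeholders, not measured data.

```python
from statistics import mean


def measured_interface_resistance(case_temp_c, heatsink_temp_c, power_w, contact_area_cm2):
    """Area-normalized interface resistance in °C·cm²/W from a steady-state measurement."""
    if power_w <= 0 or contact_area_cm2 <= 0:
        raise ValueError("power and contact area must be positive")
    return (case_temp_c - heatsink_temp_c) * contact_area_cm2 / power_w


# Hypothetical (case °C, heatsink °C) readings from five units at 50 W over 4 cm².
readings = [(71.2, 66.0), (70.8, 65.9), (71.9, 66.1), (71.0, 66.0), (72.4, 66.2)]
values = [measured_interface_resistance(case, sink, power_w=50.0, contact_area_cm2=4.0)
          for case, sink in readings]

print(f"mean {mean(values):.2f}, worst {max(values):.2f} °C·cm²/W")
```

Comparing both the mean and the worst unit against the corrected datasheet range shows whether a divergence is systematic or limited to individual assemblies.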

BLT under your assembly conditions should be measured on a sample basis during qualification — not calculated from nominal pad thickness. Measure the actual gap before pad installation, then measure or calculate BLT after assembly from known component and heatsink dimensions. This establishes whether the pad is compressing to the expected thickness under your actual clamping conditions.

When to request additional characterization beyond the standard datasheet

For sealed, long-life applications: request compression set data and aged mechanical properties if not in the datasheet. For assemblies with rough heatsink surfaces: request thermal resistance data measured at a surface finish closer to your actual condition, or test hardware samples with your specific heatsink surface. For cross-supplier comparison: request test method and pressure confirmation before comparing any conductivity or thermal resistance values.

For high-volume production where TIM performance is a significant contributor to product reliability: request batch test data from multiple production lots before qualification sign-off, as described in the supplier qualification guide. The batch data quantifies the production variation that the datasheet does not capture.

Building a verification protocol

The standard verification protocol for a new TIM specification should include three steps conducted in sequence before production commitment. First, datasheet review with correction factor applied to thermal model — confirm that the design passes with realistic inputs, not best-case inputs. Second, first article inspection of samples for dimensional and mechanical properties against specified tolerances. Third, thermal resistance measurement in hardware under production-representative conditions on a sample of five or more units.

This protocol adds one to two weeks to the qualification timeline and eliminates the most common sources of datasheet-to-reality divergence before they affect production. For assemblies where a thermal management problem in the field would require rework or return, this investment is straightforward to justify.

Conclusion

Datasheet values for thermal interface materials are useful starting points — they define the performance tier of a material, support initial supplier comparison, and provide inputs for preliminary thermal models. They are not guarantees of what will happen at the interface in your assembly, and treating them as such is the most reliable path to a thermal design that passes simulation and fails in hardware.

The gap between datasheet and reality is not random. It comes from predictable sources: test method and pressure dependence in conductivity values, surface finish and contact resistance contributions not captured in reported thermal resistance, thickness tolerance effects on BLT distribution, and long-term parameters — compression set, aging behavior, batch variation — that standard datasheets routinely omit. Understanding these sources makes the gap manageable: apply appropriate correction factors in thermal models, verify in hardware before production commitment, and request the data that datasheets do not volunteer.

For related guidance on reading thermal conductivity values and test methods, see the thermal conductivity guide. For the supplier qualification process that translates hardware verification into a production release decision, see the supplier qualification guide.

If you need material samples for hardware verification or additional characterization data beyond our standard datasheet for a specific application, contact us with your requirements.